TL;DR

Discover how to use the connected components algorithm to unify user aliases in a single table, enabling seamless tracking and analysis across all datasets. Treat this playbook as an implementation path for agentic analytics: design the AI data analyst workflow around trusted SQL and a trusted semantic layer so answers stay explainable, reusable, and safe for business decisions.

Treat this playbook as an implementation path for agentic analytics: design the AI data analyst workflow around trusted SQL and a trusted semantic layer so answers stay explainable, reusable, and safe for business decisions.

Why User Identification is Complex in B2C Companies?

User identification in data is a recurring problem for warehouse-first B2C companies. A single user can be identified with multiple aliases in the datasets:

✅ user_id - ID after sign-in✅ device_id - Mobile or desktop device's ID✅ anonymous_id - Tracking cookie✅ customer_id - Stripe✅ contact_id - Hubspot✅ email - Used for sign-in... and many more.

The Risks of Using a Single Identifier for Users

Picking a single identifier for user identification is risky. You may end up:❌ not being able to use that identifier for all your users❌ multiple identifiers for the same user

Retroactive User Recognition: Merging IDs for Accurate Tracking

ℹ️ The correct solution is to merge all these IDs into a single merged ID and create a user alias table where all aliases are associated with their merged IDs.

This approach is called retroactive user recognition and it simplifies data modelling significantly. You won't have to think about which ID to use in joins. You can safely use the merged ID once they are backfilled to the all tables.

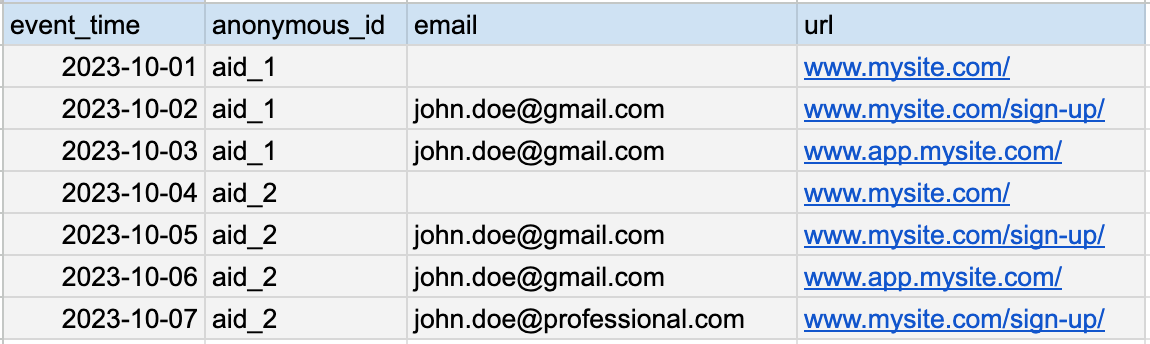

Example: How to Handle Multiple User Aliases in Clickstream Data

It is the simplest to explain this challenge with an example. Imagine you have a dataset that contains page visits of users.

It has four columns:

- event_time

- anonymous_id

- url

The anonymous_id is always filled from the browser's cookie. However, it changes if the browser cookie is refreshed. The email is filled once the user logs into the application. However, it changes if the user logs in with another email.

Now, you need to answer a simple question:

Creating a User Alias Table: Simplifying Data Modeling with Merged IDs

As you can see, no single column can uniquely identify the user. In fact, all of its anonymous_ids and emails identify the users. The anonymous_id and the emails are only aliases for the same user. What you need is a user_alias table that contains all aliases.

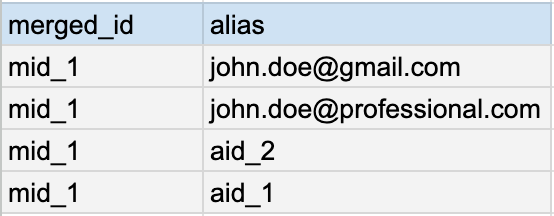

The alias table is the association of all the users' aliases to a single merged_id that can be used to track that user across all datasets.

Creating and backfilling all aliases where it is missing is the process of retroactive user recognition.

Why Building a User Alias Table is More Than Just SQL Joins?

Creating the user aliases table is way more complex than you think. Most people approach it with some special joins and group bys. However, building that alias table is impossible with only simple joins and group bys with SQL.

Why? Because the problem of user aliases is, in fact, a graph problem.

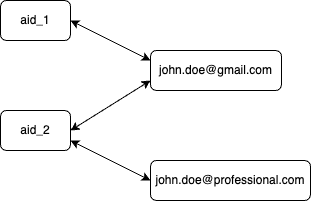

User with anonymous_id aid_1 signs in with email (john.doe@gmail.com). The browser clears the cookie at some point, so the user is also signed out. The user signs in again while having the new cookie. At another point, the user switches to the company email (john.doe@professional.com), which is typical for SaaS businesses.

We are still talking about the same user who has four aliases.

Here is the change graph of alias associations for our user:

As you can see, none of the anonymous_ids or emails are an excellent identifier for the user. We need to define a new ID uniquely associated with the user. The best way to do this is to have the whole graph as the source of the unique ID.

Once we have a unique ID, we can backfill it to all events in the table and be able to support the two original requirements.

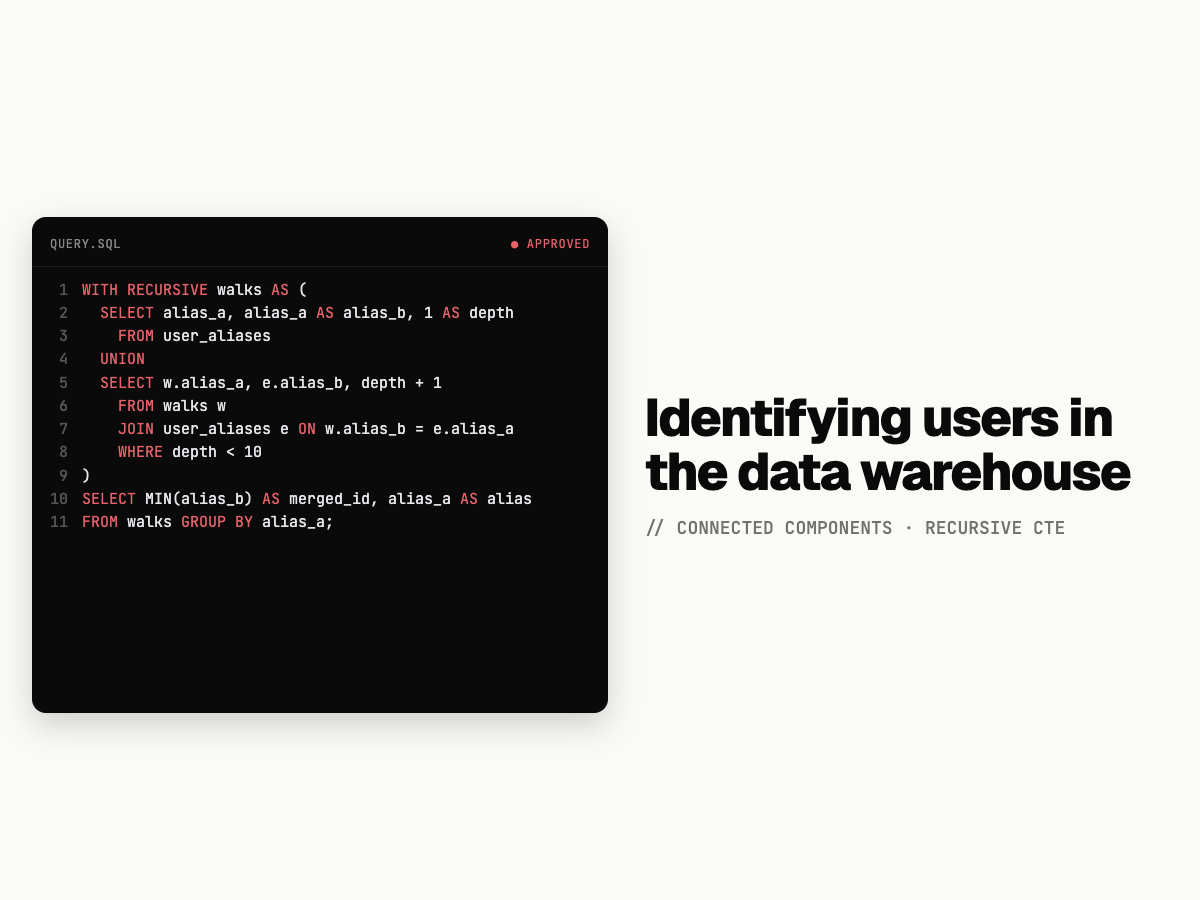

Walking the Graph: Using Recursive CTEs to Map User Aliases

To map out all the aliases, we must walk through all edges in this graph. Graph walking in SQL is only possible to do with recursive CTEs. In this example we are using Postgres SQL, with minor changes this SQL can ported to all SQL dialects.

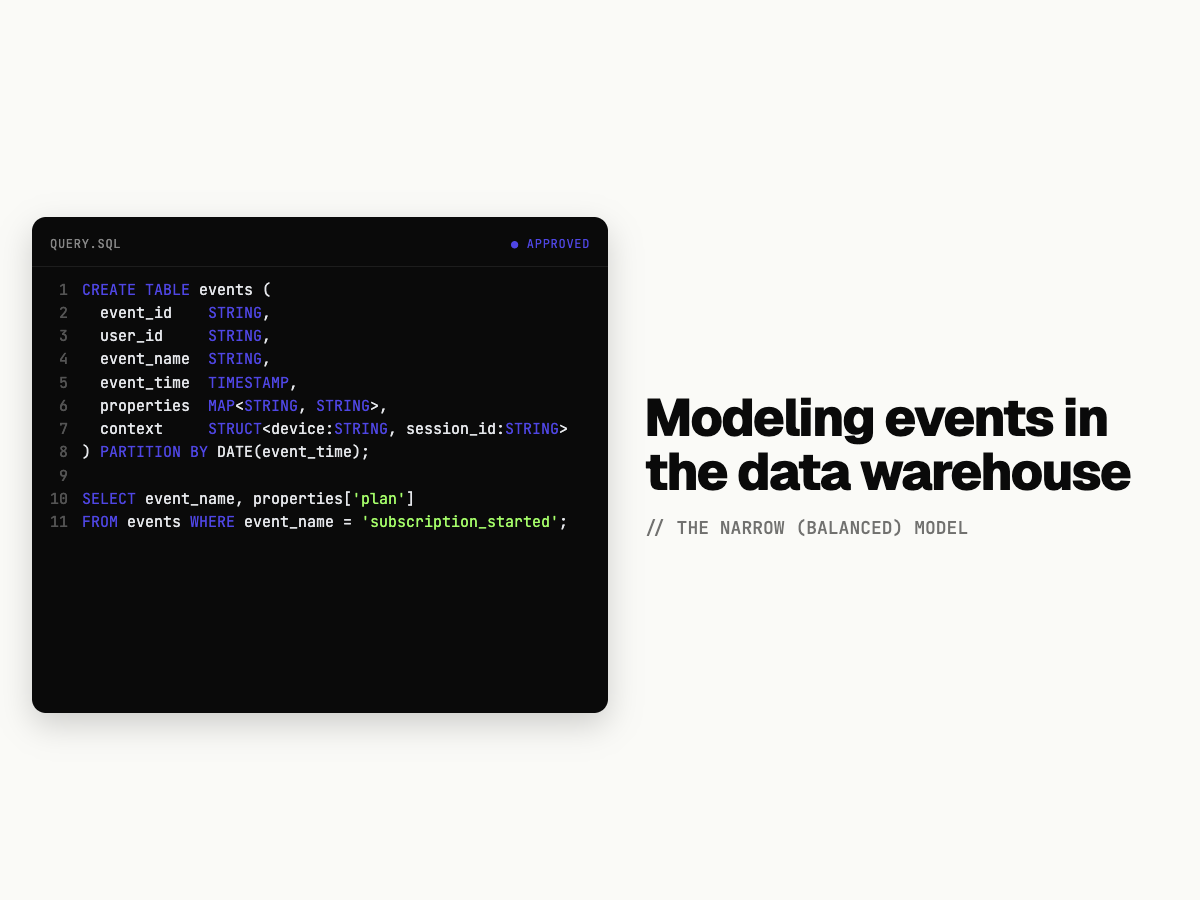

First, we need to define the edges:

create table default.user_aliases as

WITH RECURSIVE

-- collecting the graph edges

edges as (

select

anonymous_id as a,

coalesce(email, anonymous_id) as b -- making sure users without sign up are also included

from page_visits

),

-- making sure the graph is bidirectional

bidirectional as (

select a,

b

from edges

union all

select b as a,

a as b

from edges

)create table default.user_aliases as

WITH RECURSIVE

-- collecting the graph edges

edges as (

select

anonymous_id as a,

coalesce(email, anonymous_id) as b -- making sure users without sign up are also included

from page_visits

),

-- making sure the graph is bidirectional

bidirectional as (

select a,

b

from edges

union all

select b as a,

a as b

from edges

)-- The connected components algorithm in SQL:

-- walking the graph to collect all aliases

walks AS (

SELECT

a as a,

a as b,

1 as depth

FROM bidirectional

UNION

SELECT w.a,

e.b,

depth + 1 as depth

FROM walks w

JOIN bidirectional e ON w.b = e.a

and not (

e.a = w.a

and e.b = w.b

)

and not (

e.b = w.a

and e.a = w.b

)

-- Termination condition

where depth < 10

)create table default.user_aliases as

WITH RECURSIVE

-- collecting the graph edges

edges as (

select

anonymous_id as a,

coalesce(email, anonymous_id) as b -- making sure users without sign up are also included

from page_visits

),

-- making sure the graph is bidirectional

bidirectional as (

select a,

b

from edges

union all

select b as a,

a as b

from edges

),

-- The connected components algorithm in SQL:

-- walking the graph to collect all aliases

walks AS (

SELECT

a as a,

a as b,

1 as depth

FROM bidirectional

UNION

SELECT w.a,

e.b,

depth + 1 as depth

FROM walks w

JOIN bidirectional e ON w.b = e.a

and not (

e.a = w.a

and e.b = w.b

)

and not (

e.b = w.a

and e.a = w.b

)

-- Termination condition

where depth < 10

)

-- picking the merged_id for all aliases

select

min(b) merged_id,

a as alias

from walks

group by 2create table default.user_aliases as

WITH RECURSIVE

edges as (

select

anonymous_id as a,

coalesce(email, anonymous_id) as b

from page_visits

union all

select

contat_id as a,

email as b

from hubspot_contacts -- extending with Hubspot

union all

select

customer_id as a,

email as b

from stripe_customers -- extending with Stripe

),

bidirectional as (

select a,

b

from edges

union all

select b as a,

a as b

from edges

),

walks AS (

SELECT

a as a,

a as b,

1 as depth

FROM bidirectional

UNION

SELECT w.a,

e.b,

depth + 1 as depth

FROM walks w

JOIN bidirectional e ON w.b = e.a

and not (

e.a = w.a

and e.b = w.b

)

and not (

e.b = w.a

and e.a = w.b

)

-- Termination condition

where depth < 10

)

select

min(b) merged_id,

a as alias

from walks

group by 2Handling Anonymous-to-Known Transitions

Most product journeys start anonymously and become known only at sign-up or login. If you do not stitch these identities, pre-signup behavior is separated from post-signup behavior and funnels under-report activation. In practice, that means product teams optimize with partial user journeys.

Two patterns are common in warehouse pipelines. The first is an alias event that records anonymous_id -> user_id at authentication time. The second is a dedicated lookup table maintained by ingestion jobs or identity workflows. Both can work well if they are consistently backfilled into historical events.

UPDATE analytics.events e

SET user_id = a.user_id

FROM analytics.identity_aliases a

WHERE e.user_id IS NULL

AND e.anonymous_id = a.anonymous_id

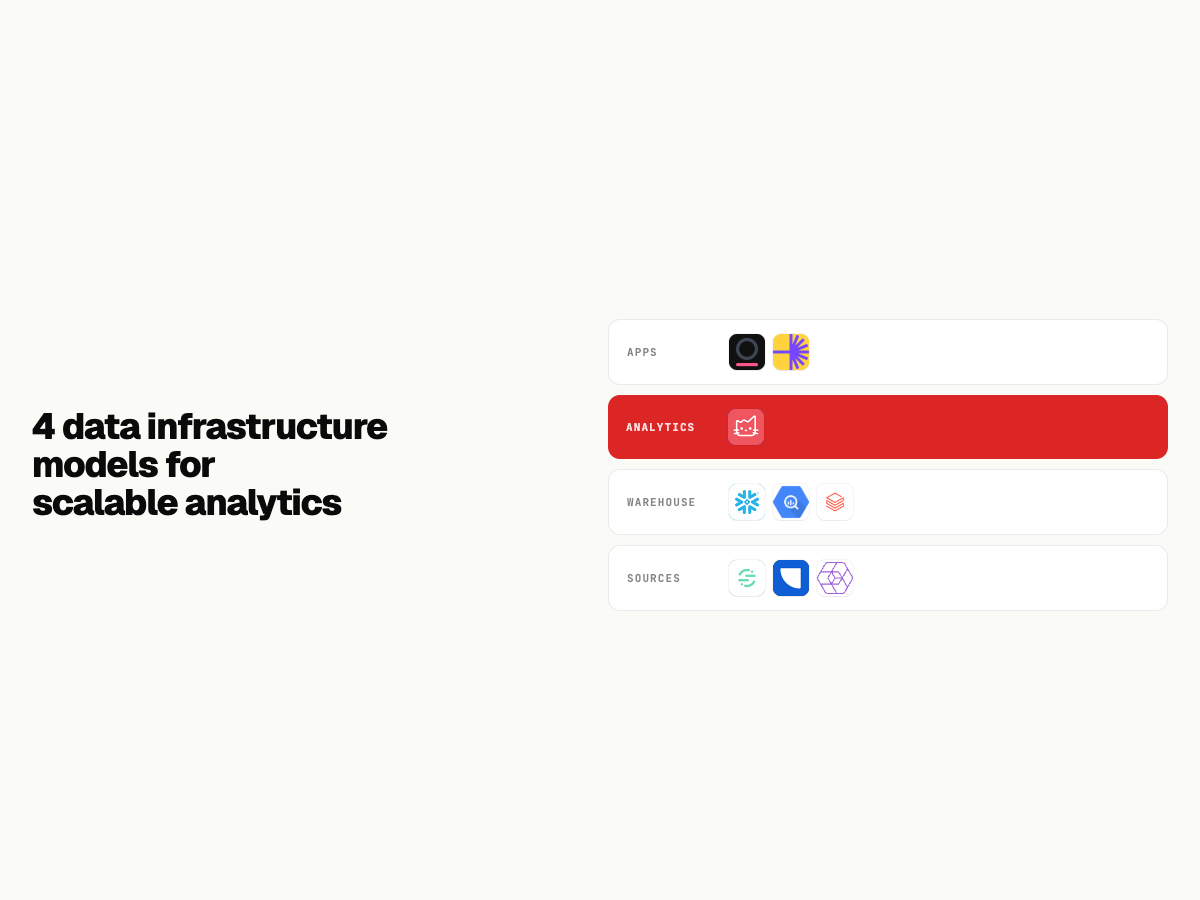

AND e.event_time <= a.alias_created_atIdentity in a Warehouse-Native Analytics Tool

Warehouse-native analytics platforms like Mitzu can apply identity resolution at query time instead of requiring a single pre-joined event table for every use case. In Mitzu's event model, you can define both user_id and anonymous_id columns and let generated SQL apply stitching logic consistently across funnels, retention, and segmentation.

This approach keeps lineage clear: raw events remain raw, identity logic is explicit, and analysts can inspect the SQL path used for each chart. It also reduces maintenance burden when identity policies evolve, because teams update one modeled logic path rather than rebuilding many downstream marts.

Testing Your Identity Resolution

Before trusting stitched metrics, validate coverage and quality. Start by checking what percentage of sessions resolve to a stable user_id after authentication. Then test for invalid one-to-many mappings and ensure alias capture covers the vast majority of sign-up flows.

SELECT

COUNT(*) AS sessions_total,

SUM(CASE WHEN resolved_user_id IS NOT NULL THEN 1 ELSE 0 END) AS sessions_resolved,

ROUND(

100.0 * SUM(CASE WHEN resolved_user_id IS NOT NULL THEN 1 ELSE 0 END) / COUNT(*),

2

) AS resolved_pct

FROM analytics.session_summarySELECT anonymous_id, COUNT(DISTINCT user_id) AS mapped_users

FROM analytics.identity_aliases

GROUP BY 1

HAVING COUNT(DISTINCT user_id) > 1SELECT

ROUND(

100.0 * SUM(CASE WHEN has_alias_event THEN 1 ELSE 0 END) / COUNT(*),

2

) AS signup_alias_coverage_pct

FROM analytics.signup_flowsConclusion

Robust identity resolution in the warehouse is the foundation of accurate product analytics. Once anonymous and known journeys are stitched reliably, every funnel, retention cohort, and behavioral segment becomes more trustworthy. For related implementation patterns, see Mitzu resources on event modeling and user ID stitching.

FAQ

What is the difference between anonymous_id and user_id in event tracking?

anonymous_id identifies pre-login behavior, usually at browser or device scope, while user_id identifies an authenticated account. A single person can generate many anonymous_ids across devices or cookie resets. Identity stitching links these IDs so analytics reflects full user journeys instead of fragmented sessions.

How should I handle users who log in across multiple devices?

Use an alias mapping that can connect multiple anonymous_ids to one stable user_id over time. This can be done with explicit alias events or a mapping table maintained by your identity pipeline. The key is to make stitching deterministic and consistently applied in all analytical queries.

Should identity resolution happen at ingestion time or at query time?

Both are valid. Ingestion-time stitching can speed common queries, while query-time stitching keeps raw data intact and makes identity logic easier to evolve. Many warehouse-native teams use a hybrid model: materialize core identity tables and still keep query-time flexibility in analytics tools.

What identity quality threshold should teams aim for?

A practical target is covering more than 95% of sign-up flows with alias mappings and keeping duplicate assignments close to zero. Teams should monitor coverage as a recurring data quality KPI rather than a one-time migration check. If coverage drops, expect immediate distortion in activation and retention metrics.